Translation-Machination

Christine Mitchell and Rita Raley edited a special issue of Amodern in 2018. It’s titled “Translation-Machination,” and in the introduction they write: “we simply still care about language as a particular kind of medium–and in our contemporary computational environments, questions about what language is, how it moves, and how it is used, manipulated, maintained, transformed, and understood seem more pressing than ever before.”

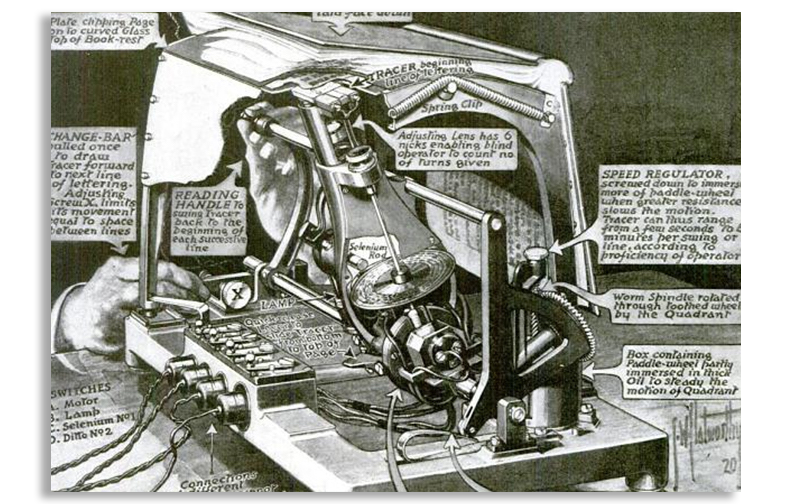

With Tiffany Chan and Mara Mills, I contributed to this special issue. Our essay focuses on optophonics (the conversion of type into audible tones) as a form of transcription for blind readers during the first half of the 20th century. Our case study for optophonics is Mary Jameson’s widely ignored contributions to what became optical character recognition (the conversion of page images into machine-readable text), and our approach combines Mara’s archival research with the MLab’s prototyping techniques for 2D to 3D translation. The essay also includes some historical audio and demonstrations that Mara digitized, together with braille letters transcribed by Shafeka Hashash. Throughout the piece, we stress the importance of “suboptimal design” to prototyping and media history. Such design privileges attention to maintenance and negotiation over novelty and automation.

The essay, titled “Optophonic Reading, Prototyping Optophones,” was the MLab’s first co-authored piece with a researcher at another institution. Tiffany and I learned a tremendous amount from Mara and her archival work, and Christine and Rita’s special issue was a perfect fit for the collaboration. The entire issue is excellent. It features work by Christine and Rita as well as Otso Huopaniemi, John Cayley, Avery Slater, Quinn DuPont, Andrew Pilsch, Nick Montfort, Jane Birkin, Karin Littau, and Joe Milutis.

A link, abstract, and acknowledgments for “Optophonic Reading, Prototyping Optophones” (open access) are below. I also point to a 2021 talk at Lawrence Technological University where I applied this research to the topic of worldbuilding. Many thanks to Tiffany and Mara for writing with me, and to Christine and Rita for their editorial work.

Optophonic Reading, Prototyping Optophones

Published in Amodern 8 (Mitchell and Raley, eds.) in 2018 | written with Tiffany Chan and Mara Mills | 5,223 words plus twelve figures, including one video and two audio files | open access

Links: essay (HTML); the reading optophone (HTML); MLab research on optophonics (HTML); talk at Lawrence Tech with slides (HTML); resources related to “Types of Prototypes” and “Critical Design” (HTML)

This article details the contributions of blind readers to the development, design, and marketing of the optophone, a text-to-tone transcription machine introduced in the early twentieth century. We combine archival research with prototyping to investigate the dimensions involved in past coding and decoding practices. If archives provide testimonial fragments about individual use, 2D to 3D translation helps scholars to more broadly characterize optophone reading and understand technical affordances.

Acknowledgments: Katherine Goertz (UVic), Evan Locke (UVic), Danielle Morgan (UVic), and Victoria Murawski (UVic) contributed to early iterations of this research, which was supported by the Social Sciences and Humanities Research Council of Canada, the Canada Foundation for Innovation, and the British Columbia Knowledge Development Fund. A repository of files for prototyping a reading optophone with historical context is available at github.com/uvicmakerlab/optophoneKit. Archival research and digitization by Mills was supported by U.S. National Science Foundation Award #1354297, with thanks to Harvey Lauer for materials from his personal archive, and Shafeka Hashash for braille transcriptions. Early drafts of this piece appeared on the Digital Rhetoric Collaborative blog and at the “Histories of Digital Labor” panel organized by Shawna Ross for the 2017 Modern Language Association (MLA) convention.

Featured image: illustration of an optophone’s features, care of Scientific American (Nov. 1920, from “How the Blind May Read with Their Ears”), in the public domain.

I created this page on 25 June 2019 and last updated it on 24 June 2024.